My February 2003 column for Storage magazine focused on the surprising difficulty of measuring storage utilization. I wrote:

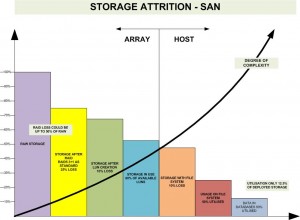

“A true measurement of utilization would reflect every layer of usage metrics – from raw disk in a shared array to used storage within files. Raw storage for each new frame of reference is contained within the used storage measured above it, so low utilization is compounded as we move deeper into the stack.”

In that column, I suggested that utilization of any resource was based on just three metrics:

- Raw

- Usable

- Used

But these metrics depend on the frame of reference being measured. It’s trivially simple to determine the raw, usable, and used capacity for a storage array, server, or database. But what happens when one tries to measure storage utilization all the way through the stack?
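For a single frame of reference, the three metrics reduce to a few simple ratios. The sketch below is a minimal Python illustration, not a standard definition. The `Capacity` class and its `usable_fraction`, `fill`, and `utilization` names are hypothetical, and it assumes all three figures are expressed in the same unit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capacity:
    """Raw, usable, and used capacity for one frame of reference (hypothetical model)."""
    raw: float     # total capacity presented to this layer
    usable: float  # capacity left after this layer's overhead (RAID, reserves, metadata)
    used: float    # capacity actually consumed at this layer

    def __post_init__(self):
        # A layer cannot use more than it can offer, nor offer more than it has.
        if not (0 < self.usable <= self.raw and 0 <= self.used <= self.usable):
            raise ValueError("expected 0 <= used <= usable <= raw and usable > 0")

    @property
    def usable_fraction(self) -> float:
        """Share of raw capacity that survives this layer's overhead."""
        return self.usable / self.raw

    @property
    def fill(self) -> float:
        """How full the usable space is."""
        return self.used / self.usable

    @property
    def utilization(self) -> float:
        """Used over raw, which equals usable_fraction * fill."""
        return self.used / self.raw
```

For example, an array with 100 units raw, 80 usable, and 60 used has a utilization of 0.6, which is its usable fraction (0.8) times its fill (0.75).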

When vendors take up this challenge, the discussion tends to get diverted into a cul-de-sac that presents their products most favorably, as was the case with Chuck Hollis’ comparison of his EMC CLARiiON to HP’s and NetApp’s storage products. Was Chuck wrong? Was HP right? Or does NetApp have the best utilization? One thing is certain: we’re getting nowhere if we can’t agree on some basic terminology.

Credit storage architect Chris Evans with seeing the problem for what it was. He noticed the matryoshka (nesting-doll) effect and put together a “waterfall” diagram showing how low utilization is compounded as we move down the stack. He also notes that complexity rises as we move to the right, something I never called out.

We were both onto the same thing, though, and my study of storage utilization (published in the April 2003 issue) supported his suggestion that the raw-to-used ratio might be as poor as 10:1 on average, meaning only about 10% of raw capacity actually holds data. At the time, I even put together a similar waterfall chart, but it was never published outside the company I worked for (as far as I know).

So I fully and enthusiastically support Chris’ ideas on this topic! Let’s come up with some standard metrics for the various places that storage can be “raw, usable, and used”:

- Disk drives often have excess space (raw), and this is especially true of enterprise flash drives

- RAID sets definitely follow this pattern

- Storage arrays themselves can have unused usable space (as noted by Marc Farley)

- Storage virtualization can add another layer of utilization loss

- On the host side, we must consider volume managers, which can perform all the functions of an array

- Filesystems also have raw, usable, and used space

- As do applications that manage their own storage, like databases

- Add in capacity management technologies like compression and deduplication to really mess things up

- Finally, server virtualization can sit above or below these server variables, and virtual machines themselves often have unused space.
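To see how losses compound across the layers listed above, a waterfall can be computed by multiplying per-layer factors. This Python sketch assumes each layer can be summarized by one factor: the used capacity it hands upward divided by the raw capacity it received. The factors in `example` are made-up placeholders rather than measurements, and `waterfall` and `raw_to_used` are illustrative names. A data-reduction layer such as compression or deduplication is modeled with a factor above 1.0, since it lets more logical data sit on less physical capacity.

```python
from math import prod


def waterfall(layers):
    """Return (name, cumulative fraction of bottom-layer raw capacity) per layer.

    layers: (name, factor) pairs ordered bottom (disk) to top (VM), where
    factor = used capacity handed upward / raw capacity received (hypothetical).
    """
    rows, remaining = [], 1.0
    for name, factor in layers:
        if factor <= 0:
            raise ValueError(f"{name}: factor must be positive")
        remaining *= factor  # losses (or data-reduction gains) multiply
        rows.append((name, remaining))
    return rows


def raw_to_used(layers):
    """End-to-end raw:used ratio; a result of 10.0 means 10:1."""
    return 1.0 / prod(factor for _, factor in layers)


# Placeholder factors for illustration only, not measured values.
example = [
    ("disk drive", 0.9), ("RAID set", 0.8), ("array", 0.8),
    ("storage virtualization", 0.9), ("volume manager", 0.85),
    ("filesystem", 0.8), ("database", 0.7),
    ("compression/dedup", 1.5), ("virtual machine", 0.7),
]

for name, remaining in waterfall(example):
    print(f"{name:<24}{remaining:6.1%}")
print(f"raw:used = {raw_to_used(example):.1f}:1")
```

Because the factors multiply, modest losses at each layer add up quickly, while a data-reduction factor above 1.0 can partly mask them. That is one reason a single end-to-end number is hard to interpret without the per-layer breakdown.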

Simply put, there are a lot of places for a few unused bytes to hide. Anyone want to bet that 10:1 is optimistic? And we’re only talking about capacity utilization – there are whole other worlds of power efficiency and performance to consider as well…
