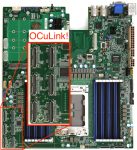

With the advent of AMD Threadripper and Epyc, we are about to see an explosion of PCIe lanes in the prosumer and datacenter markets. Although many of those lanes will be taken up by conventional PCIe cards, some will be used for SSDs (M.2 and U.2) or for external connectivity. This is where OCuLink might finally take off: as an AMD alternative to Thunderbolt for external PCIe peripheral connectivity.

PCIe

Here’s Something Your Raspberry Pi Can’t Do: Gigabit Ethernet and SATA in the Olimex A20-OLinuXIno-LIME2

I’ve really enjoyed experimenting with the Raspberry Pi, and have even deployed a few as UNIX servers in my home and office network. The quad-core performance of the latest Pi models is awesome, but serious I/O limitations remain. With just one USB 2.0 interface shared for all network and storage operations, you aren’t going to […]

Adding a Second Ethernet Port to an Intel NUC via Mini PCIe

As I mentioned in my previous post about Raspberry Pi power monitoring, I recently built a VMware vSphere “datacenter” from three Intel NUC mini PCs. One limitation of the NUC is that it has just one Ethernet port. But there’s a Mini PCIe slot inside the fourth-generation NUC that can be used to add a second Ethernet NIC!

Musing: Could We Replace Ethernet With PCIe?

Greg “EtherealMind” Ferro recently “mused” that it might be a good idea to replace PCI Express (PCIe) inside servers or rack-scale infrastructure with Ethernet. But this seems to be the exact opposite of the direction the industry is headed. Rather than replacing PCIe with Ethernet, companies like Intel seem set on replacing short-range Ethernet (in rack-scale systems) with PCIe!

The Rack Endgame: Open Compute Project

On reading my thoughts about the evolution of enterprise storage, many pointed out that this looks an awful lot like the Facebook-led Open Compute Project (OCP). This is entirely intentional. But OCP is simply one expression of this new architecture, and perhaps not the best one for the enterprise.