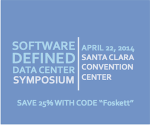

I’ve written and spoken quite a bit on the “software-defined” future, what it means and how it will come about. Although it seems like a marketing buzzword to some, I feel it is a fairly accurate description of the future of the enterprise and service provider data center. That’s why I’m working to organize the next Software-Defined Data Center Symposium, and am happy to announce that it will be held in Santa Clara, CA on April 22, 2014.

Coho Data

Making a Case For (and Against) Software-Defined Storage

Everyone is talking about “software-defined” everything lately, so it was only a matter of time before industry buzz turned to software-defined storage. VMware and EMC really stoked the flames with a constant barrage of marketing on the topic. But how exactly do you software-define storage? And what does this mean?

Infographic: Datacenter History In Lego

You might remember my October post, Datacenter History: Through the Ages in Lego. Now it’s available as a handy infographic, thanks to my friend Aditya Vempaty of Coho Data. It’s pretty cool how this turned out – I think he’s got a real talent for this kind of thing!

Scaling Storage In Conventional Arrays

It is amazing that something as simple-sounding as making an array get bigger can be so complex, yet scaling storage is notoriously difficult. Our storage protocols just weren’t designed with scaling in mind, and they lack the flexibility needed to dynamically address multiple nodes. So my hat is off to these companies and others who have come up with clever ways to maintain compatibility while scaling out beyond the bounds of a single storage array.

Scale-Out Storage Field Day?

Having wrapped up Storage Field Day 4 this week, it seems that the theme was scaling storage. Delegates learned about scale-out storage from CloudByte, Coho Data, Nimble Storage, Overland Storage, Avere Systems, Gridstore, Oxygen Cloud, and Cleversafe.