I was recently given an old HP MediaSmart EX470 server along with some other junk hardware. Although it has no graphics, a slow single-core AMD Sempron CPU, and just 512 MB of RAM, I was able to revive it quite satisfactorily. Here’s how I upgraded the hardware and software.

Computer History

Considering the history of computing, from the enterprise to the home.

ZFS Is the Best Filesystem (For Now…)

ZFS should have been great, but I kind of hate it: ZFS seems to be trapped in the past, before it was sidelined as the cool storage project of choice; it’s inflexible; it lacks modern flash integration; and it’s not directly supported by most operating systems. But I put all my valuable data on ZFS because it simply offers the best level of data protection in a small office/home office (SOHO) environment. Here’s why.

Co-Processors, GPGPU, and Heterogeneous Computing

I’ve been thinking a lot lately about microprocessors, from the many-core CPUs that AMD and Intel introduced recently to the massively scalable GPGPU processing that’s taking machine learning by storm. After years of consolidation on commodity x86 CPUs, it seems that the computing paradigm is turning again to specialized offload processors. This trend towards heterogeneous computing will change the face of hardware, from mobile devices to the datacenter.

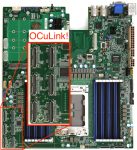

What is OCuLink?

With the advent of AMD Threadripper and Epyc, we are about to see an explosion of PCIe lanes in the prosumer and datacenter market. Although many of those lanes will be taken up by conventional PCIe cards, some will be used for SSDs (M.2 and U.2) or for external connectivity. This is where OCuLink might finally take off: as an AMD alternative to Thunderbolt for external PCIe peripheral connectivity.

Dell, Wall Street, Magic Beans, and the End of EMC

As of today, EMC Corporation is no longer an independent company. Who thought we would see this day? From now on, EMC is simply a brand for parts of Dell’s Infrastructure Solutions and Services businesses. This marks a major shift in the enterprise storage world, for IT, and perhaps for American business in general.